Apple introduces groundbreaking accessibility features designed to aid individuals who have experienced speech loss, visual impairment, or blindness.

Apple has unveiled a set of software features aimed at improving accessibility for people with cognitive, vision, hearing, and mobility disabilities, underscoring the company’s commitment to inclusive design.

Notably, Apple is also introducing tools designed specifically for people who cannot speak or who are at risk of losing their ability to speak. The features are scheduled to arrive later this year on iPhone, iPad, and Mac.

Have a Look at These New Accessibility Features

“At Apple, we’ve always believed that the best technology is technology built for everyone. Today, we’re excited to share incredible new features that build on our long history of making technology accessible, so that everyone has the opportunity to create, communicate, and do what they love,” said Apple CEO Tim Cook.

“Accessibility is part of everything we do at Apple. These groundbreaking features were designed with feedback from members of disability communities every step of the way, to support a diverse set of users and help people connect in new ways,” said Sarah Herrlinger, Apple’s senior director of Global Accessibility Policy and Initiatives.

1. Personal Voice

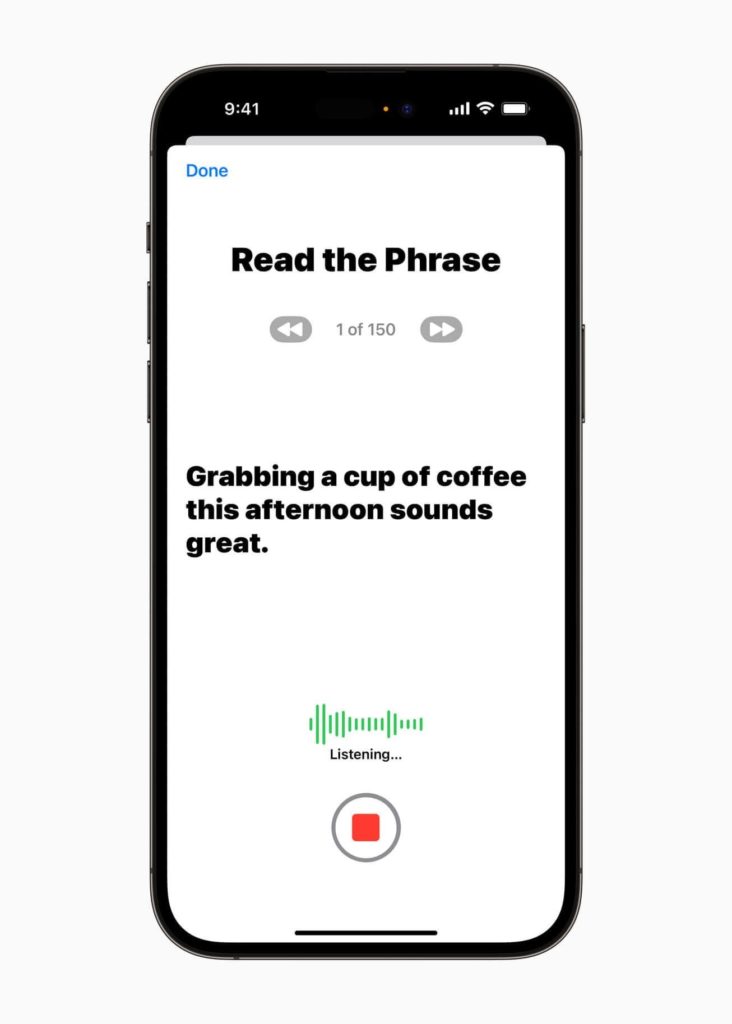

Among the new features, Personal Voice stands out. It was created specifically for people who are at risk of losing their ability to speak, including those diagnosed with ALS (amyotrophic lateral sclerosis) and similar conditions.

Personal Voice lets users preserve a voice that sounds like their own and communicate with it through their iPhone. Creating a Personal Voice is a simple process.

Users read aloud a randomized set of text prompts to record approximately 15 minutes of audio on an iPhone or iPad. Personal Voice also integrates with Live Speech, so users can speak with their loved ones in their own Personal Voice.

At the moment, Personal Voice is available only in English and can be created only on devices powered by Apple silicon.

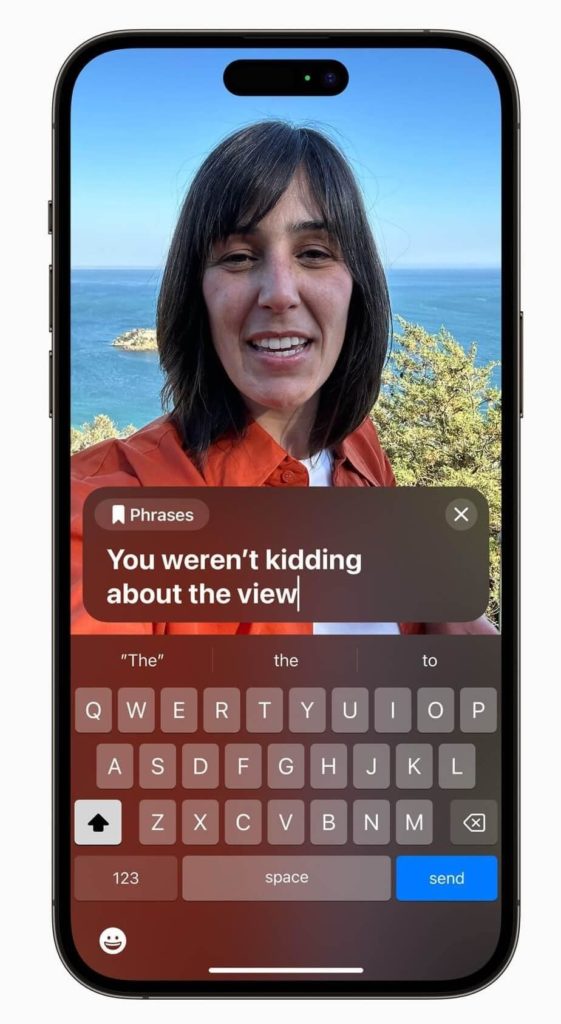

2. Live Speech

Live Speech, available on iPhone, iPad, and Mac, lets users type what they want to say and have it spoken aloud. It works during FaceTime conversations, phone calls, and face-to-face interactions.

Users can also save frequently used phrases so they can respond quickly during lively conversations with loved ones, colleagues, and friends.

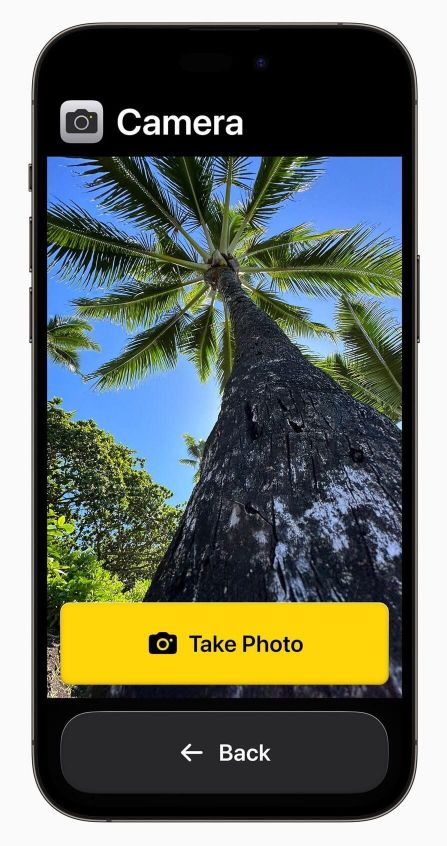

3. Assistive Access

Assistive Access is designed for users with cognitive disabilities. It streamlines the iPhone and iPad interface so that chatting, sharing photos, and listening to music are easier and more intuitive. Notably, the Phone and FaceTime apps are combined into a single Calls app, simplifying communication.

Users can also customize the interface to suit their preferences, including contrast, text labels, icons, and more.

4. Point and Speak

With the introduction of Detection Mode in Magnifier, Apple is adding a feature called Point and Speak, which is designed for users who are blind or have low vision.

Point and Speak makes it easier for these users to interact with physical objects that carry text labels. It uses the device’s camera and LiDAR Scanner to detect and identify text on objects, giving users useful information about their surroundings.

According to Apple, Point and Speak combines input from the camera, the LiDAR Scanner, and on-device machine learning. For example, as a user moves a finger across the keypad of a household appliance such as a microwave, Point and Speak recognizes the text on each button and announces it aloud.

Point and Speak will be available on iPhone and iPad devices equipped with the LiDAR Scanner. It will support English, Italian, French, German, Chinese, Spanish, Portuguese, Korean, Japanese, Cantonese, and Ukrainian.

5. Voice Control

Apple is also updating Voice Control with phonetic suggestions for text editing. Users who type with their voice can pick the right word from a list of homophones, such as “do,” “due,” and “dew,” which sound alike but have different spellings and meanings.


Signing-Off

Apple has officially announced that this collection of software features for speech, cognitive, and vision accessibility will arrive later this year. The company has not disclosed exact release dates, but the features are widely expected to debut at the upcoming Worldwide Developers Conference (WWDC), scheduled for early June.
