Google held an AI event titled “Live From Paris” on 8 February 2023. At the event, senior Google employees presented the company’s latest advances in AI and described the major changes coming to Search, Maps, Arts & Culture, and Travel.

Google celebrates its 25th anniversary this year. This was the company’s first event of 2023, and it had a few shortcomings, which we list at the end of this article.

The entire 40-minute event can still be viewed on YouTube.

Or just read everything that is important in 4 minutes.

What Can We Expect From Google In The Near Future?

Google Bard

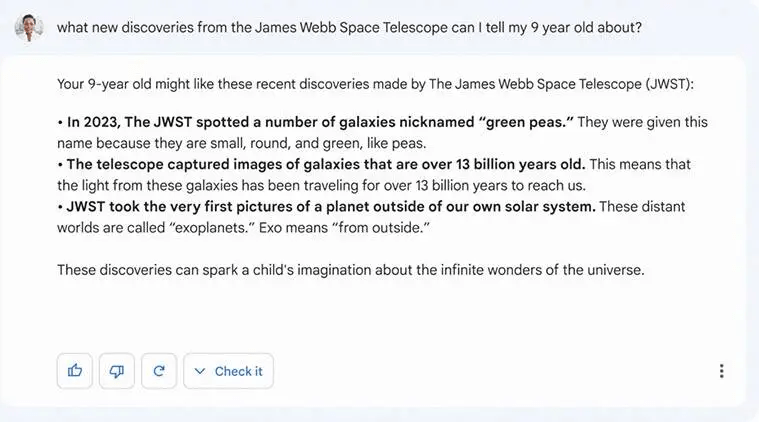

Google announced its new AI chatbot service, Bard, which is already making ripples in the tech industry. To provide informative and pertinent replies to your inquiries, Bard uses LaMDA (Language Model for Dialogue Applications) technology. Currently, apps like Bard and ChatGPT produce just one response per question.

Google has stated that a “lightweight” variant of the model is being made available in an early testing phase before the full launch. Testing by internal and external testers has already begun. Because many questions have more than one valid answer, the new Google Search will provide both conventional search results and AI chatbot-style responses.

Google Translate

Over a billion people around the globe use Google Translate across 133 languages. Displaced people from Ukraine, for example, have found the app to be a lifesaver as they move into many different countries across Europe. Google has recently added 33 new languages (Corsican, Latin, Yiddish, etc.) to offline mode so that users can communicate without an internet connection.

A major new feature in the Google Translate app is that it will soon show multiple meanings for words that can be used in more than one context. The feature will begin with English, German, French, Japanese, and Spanish, with more languages to follow. Take, for example, the word “content”: it can mean feeling happy, or it can describe material such as text, video, or audio.

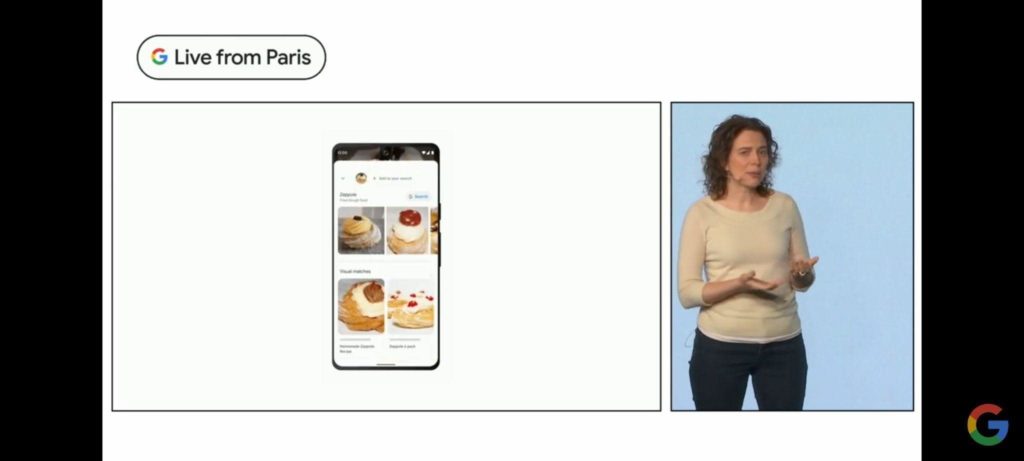

Lens Multisearch

Google users can look up anything by taking a photograph of it or by using an image from their device’s photo library. Lens is used ten billion times each month, which signals that visual search has moved from a novelty to an everyday reality. Users will also be able to search for specific elements in images or videos; for example, Lens can identify a building that appears in a video and look up its name. This new Lens functionality will be made available in the coming months.

One of the primary attractions of the event was Google Lens’ multisearch. The main idea is to search using both text and an image at the same time. For instance, you can take a picture of a shirt and type in the color you want to buy it in. Google has rolled this feature out worldwide.

Multisearch Near Me

Google also demonstrated “multisearch near me,” which lets you find something specific, such as a particular meal or product, at places close by. Currently, it is only available in the US. Android users will soon be able to use Google Lens to run text and image searches without ever leaving the current screen.

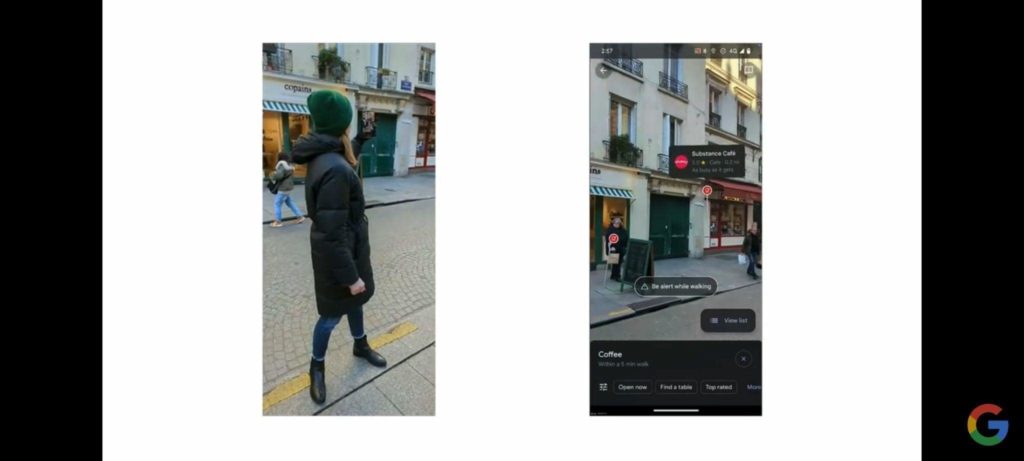

Immersive View

Immersive View, which was originally designed for landmarks only, is now being made available in five cities: London, Los Angeles, New York City, San Francisco, and Tokyo. Users can virtually fly over buildings to inspect entrances, traffic patterns, and even busy areas. They can also get directions in Google Maps and have augmented reality arrows overlaid on the real-world view through their device.

Users can use the time slider to see what a location will look like at various times of the day and in different weather conditions, as well as when it is likely to be less crowded. They can even look inside cafes to scout out the place before visiting.

Google builds these interior views with the aid of neural radiance fields (NeRF), an AI method that creates a 3D-rendered scene out of many still photos taken from various perspectives by mapping color and light. NeRF is what makes the explorable walkthroughs possible.

Indoor Live View, a comparable AR solution for indoor areas like airports and shopping malls, was also on display. It allows users to quickly locate things like baggage claims, the closest elevator, and food courts. It is being introduced at 1,000 new locations, including malls, train stations, and airports.

EV Charging Filter

Google is also releasing some brand-new tools to support EV drivers. For short trips, Google Maps will recommend charging stations, and a “very fast” filter will narrow results to the fastest chargers. When you search for locations like hotels or supermarkets, charging stations will also be displayed (if available). These functions will become available for EVs with integrated Google Maps.

Google Arts & Culture

Google provided an update on Woolaroo, an AI-powered photo-translation tool that was introduced in May 2021. It aims to recognize things in images and provide users with their names in many endangered languages.

Google has also focused on using AI to preserve the contributions of women to science, among other things. The Arts & Culture team uncovered previously hidden accomplishments by female scientists by using AI to evaluate photographs and historical scientific records.

Google also employed AI algorithms to analyze well-known artworks, giving users an AR experience that lets them view the artwork down to the last brushstroke using only their smartphones.

Something Spicy: A Few Shortcomings Of The “Live From Paris” AI Event By Google

All Speakers Were Fumbling Throughout The Event.

This may not be an important point in judging the success of an event, but the speakers in every section fumbled while presenting. It seemed that they were not ready, or that the content had been changed at short notice after Microsoft held its event about Bing’s integration with ChatGPT a day earlier.

Google Bard Was Not The Center Of Attraction Of the Event.

Most of us expected Google Bard to be the main point of discussion but surprisingly there was no new revelation about Bard. Google had announced certain information about Bard a few days before this event and the same was reiterated at the event.

The Testing Device Went Missing.

When Google conducts an event, you would expect it to be close to flawless. A few shortcomings are understandable, because things do not always go the way you plan them. But a testing device going missing shows a lack of preparation for the event. As a result, we missed the demonstration of the multisearch functionality. That is probably why the 40-minute event wrapped up in 38 minutes.

Google Bard May Not Be Accurate At All Times.

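AI chatbot technology is still pretty new, and we can expect a degree of inaccuracy from Google Bard and its rivals. One example stands out: in the demo published by Google, Bard is asked what new discoveries from the James Webb Space Telescope could be shared with a 9-year-old, and its answer contains inaccurate information.

The last point of Bard’s answer claimed that the James Webb Space Telescope took the very first pictures of a planet outside our solar system. According to NASA, that is inaccurate: the first image of an exoplanet was taken in 2004 by the European Southern Observatory’s Very Large Telescope. (Reference Article)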

Your Thoughts On The “Live From Paris” AI Event By Google

The new projects undertaken by Google look more interesting and more attractive than what Microsoft showed at its event on Bing’s integration with ChatGPT, held a day earlier. We will have to wait some time before we can use all these AI-driven features and see for ourselves how AI will make our lives better than before.

Please let us know in the comments below if you have any questions or recommendations. We would be delighted to provide you with a resolution. We frequently publish advice, tricks, and solutions to common tech-related problems. You can also find us on Facebook, Twitter, YouTube, Instagram, Flipboard, and Pinterest.

Subscribe Now & Never Miss The Latest Tech Updates!
